How Do You Handle Performance Optimization and Caching?

Performance optimization and caching are essential strategies in modern web development and system design. They ensure that applications run smoothly, respond quickly, and provide a seamless user experience even under heavy load. In this post, we will dive deeply into what performance optimization and caching entail, why they matter, and how to implement them effectively.

Understanding Performance Optimization

Performance optimization refers to the process of improving the speed and efficiency of your web application or system to reduce latency, increase throughput, and minimize resource consumption.

Key goals of performance optimization include:

- Reducing page load time

- Decreasing server response time

- Minimizing bandwidth usage

- Improving CPU and memory utilization

- Enhancing overall user experience

Common Causes of Performance Bottlenecks

Before optimizing, it’s crucial to identify where the bottlenecks are. Typical performance culprits include:

- Database query inefficiencies (e.g., missing indexes, N+1 query problem)

- Heavy or uncompressed assets like images, CSS, and JS files

- Slow server-side processing or expensive computations

- Redundant or blocking JavaScript operations

- High network latency and insufficient bandwidth

The Role of Caching in Performance Optimization

Caching is a powerful technique to temporarily store frequently accessed data or assets in faster storage media to reduce access times and computational overhead. It acts as a front-line defense to speed up data retrieval and system responsiveness.

“Caching is the single most effective tool to dramatically increase application performance and scale systems efficiently.”

Types of Caching

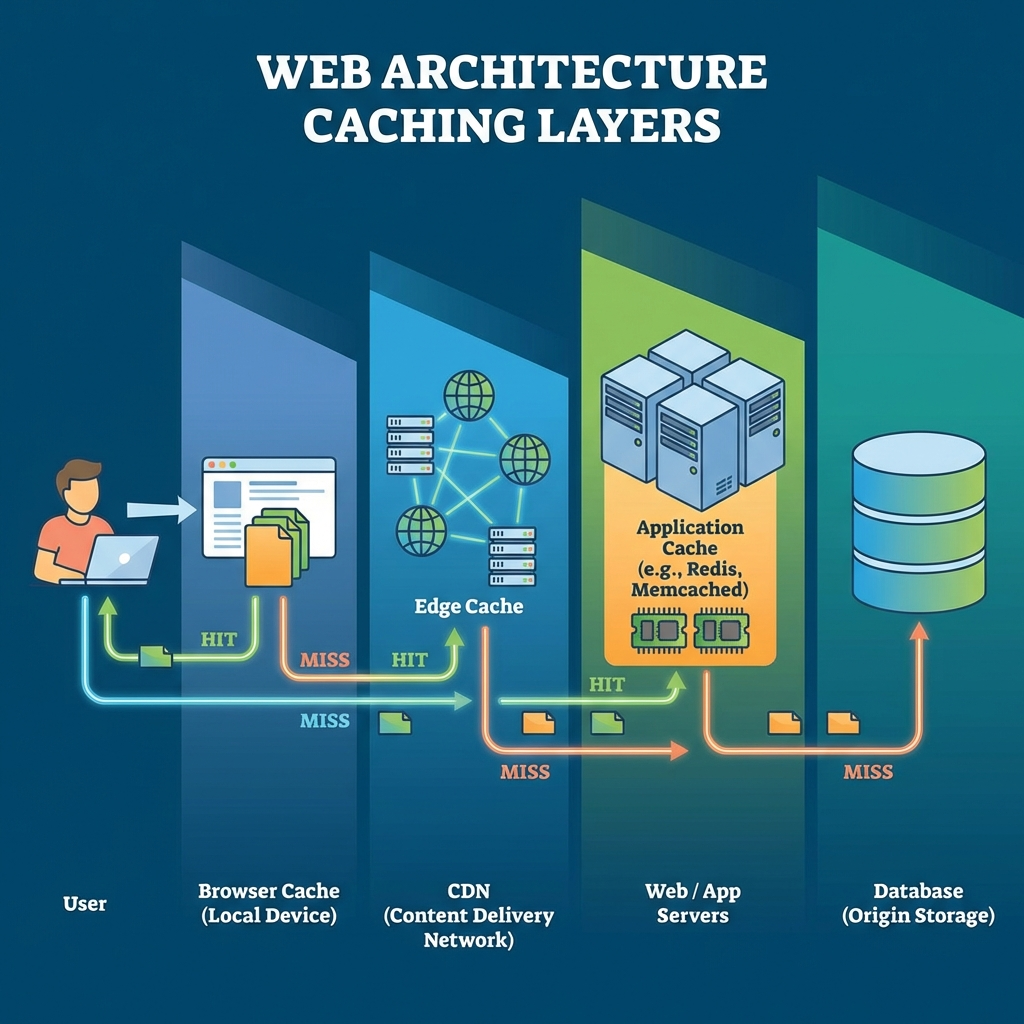

There are several classes of caching relevant to web performance optimization:

- Browser Caching: Storing static assets like images, CSS, and JS files on the client’s browser to avoid re-fetching on every visit.

- HTTP Caching: Using HTTP headers (Cache-Control, ETag, Last-Modified) to control how resources are cached across proxies and browsers.

- Content Delivery Network (CDN): Distributing cached versions of content globally to reduce latency.

- Server-side Caching: Caching database query results, API responses, or computed views on the server to avoid repeated expensive processing.

- In-memory Caching: Using technologies like Redis or Memcached to store frequently accessed data in RAM, which is far faster to read than disk or a remote database.

- Application-level Caching: Custom caches maintained within the application scope, such as memoization or local caching of function results.
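
To make the application-level case concrete, here is a minimal memoization sketch using Python’s standard `functools.lru_cache`. The `shipping_cost` function and its pricing rule are hypothetical stand-ins for any expensive, repeatable computation.

```python
from functools import lru_cache

call_count = 0  # counts how often the expensive body actually runs


@lru_cache(maxsize=128)
def shipping_cost(weight_kg: int, zone: str) -> float:
    """Hypothetical expensive computation; results are cached per argument pair."""
    global call_count
    call_count += 1
    base = {"domestic": 4.0, "international": 12.0}[zone]
    return base + 1.5 * weight_kg


first = shipping_cost(3, "domestic")   # computed by running the function body
second = shipping_cost(3, "domestic")  # served from the in-process cache
```

Memoization like this is only safe for functions whose result depends solely on their arguments; anything that reads changing external state needs an expiry or invalidation rule as well.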

Strategies for Effective Performance Optimization and Caching

1. Optimize Database Access

- Use indexes strategically to speed up queries.

- Analyze and rewrite expensive queries; avoid SELECT * statements.

- Implement query caching using server-side caches or built-in database features.

- Use connection pooling and minimize round trips.
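
As a rough illustration of minimizing round trips, the sketch below contrasts the N+1 pattern with a single JOIN, using an in-memory SQLite database. The `authors` and `posts` tables, the index name, and the sample rows are all invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT);
    CREATE INDEX idx_posts_author ON posts(author_id);
    INSERT INTO authors VALUES (1, 'Ada'), (2, 'Linus');
    INSERT INTO posts VALUES (1, 1, 'Caching 101'), (2, 1, 'Indexes'), (3, 2, 'Pooling');
""")


def titles_n_plus_one(conn):
    """One query for the authors, then one more query per author."""
    queries = 0
    result = {}
    authors = conn.execute("SELECT id, name FROM authors ORDER BY id").fetchall()
    queries += 1
    for author_id, name in authors:
        rows = conn.execute(
            "SELECT title FROM posts WHERE author_id = ? ORDER BY id", (author_id,)
        ).fetchall()
        queries += 1
        result[name] = [title for (title,) in rows]
    return result, queries


def titles_single_join(conn):
    """The same data in one round trip, selecting only the needed columns."""
    rows = conn.execute(
        "SELECT a.name, p.title FROM authors a "
        "JOIN posts p ON p.author_id = a.id ORDER BY a.id, p.id"
    ).fetchall()
    result = {}
    for name, title in rows:
        result.setdefault(name, []).append(title)
    return result, 1


slow_result, slow_queries = titles_n_plus_one(conn)
fast_result, fast_queries = titles_single_join(conn)
```

With two authors the difference is three queries versus one; with thousands of authors the first version issues thousands of queries while the second still issues one.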

2. Minimize Asset Size and Requests

- Compress images and use next-gen formats like WebP.

- Minify CSS, JavaScript, and HTML files.

- Use lazy loading and defer non-critical JavaScript.

- Combine multiple CSS/JS files to reduce HTTP requests (where appropriate).
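
For text assets such as CSS and JavaScript, compression on the wire is one way to shrink what the browser downloads. The sketch below uses gzip from the Python standard library purely as an assumption; the post does not name a specific algorithm, and the sample stylesheet is made up.

```python
import gzip

# A made-up, repetitive stylesheet standing in for a real CSS bundle.
css = ".card { margin: 0; padding: 8px; color: #333; }\n" * 200
raw = css.encode("utf-8")

# Compress once at build or deploy time rather than on every request.
compressed = gzip.compress(raw, compresslevel=6)

# Fraction of the original size that still has to be transferred.
ratio = len(compressed) / len(raw)
restored = gzip.decompress(compressed)
```

Real bundles are less repetitive than this sample, so the savings will be smaller; measuring on your own assets is the only reliable guide.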

3. Implement Efficient Caching Policies

- Set appropriate HTTP caching headers based on resource volatility.

- Use CDNs to cache and serve static content closer to users geographically.

- Cache whole page responses or partial fragments on the server side where possible.

- Expire caches intelligently following content updates.
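
One possible way to tie these policies together is sketched below: a content-hashed file name for static assets, a lookup from resource category to `Cache-Control` value, and an ETag check that answers with 304 when the client’s copy is current. The category names, the max-age values, and all helper names are assumptions chosen for illustration, not recommendations for every site.

```python
import hashlib
from pathlib import PurePosixPath
from typing import Optional, Tuple


def versioned_name(filename: str, content: bytes) -> str:
    """Embed a short content hash in a bare file name: app.css -> app.<hash>.css."""
    path = PurePosixPath(filename)
    digest = hashlib.sha256(content).hexdigest()[:8]
    return f"{path.stem}.{digest}{path.suffix}"


def cache_control_for(kind: str) -> str:
    """Map a hypothetical resource category to a Cache-Control header value."""
    policies = {
        "versioned_asset": "public, max-age=31536000, immutable",  # URL changes with content
        "html_page": "no-cache",                # may be stored, must be revalidated
        "api_response": "public, max-age=60",   # short-lived shared data
        "personalized": "private, no-store",    # never kept by shared caches
    }
    return policies[kind]


def make_etag(content: bytes) -> str:
    """Derive a quoted validator from the response body."""
    return '"' + hashlib.sha256(content).hexdigest()[:16] + '"'


def respond(content: bytes, if_none_match: Optional[str]) -> Tuple[int, bytes]:
    """Return (status, body); 304 with an empty body if the client's ETag matches."""
    if if_none_match == make_etag(content):
        return 304, b""
    return 200, content


old_name = versioned_name("app.css", b"body { color: red; }")
new_name = versioned_name("app.css", b"body { color: blue; }")
status, body = respond(b"hello", make_etag(b"hello"))
```

Because a changed file gets a new name, the long `max-age` on versioned assets never serves stale content, while the HTML that references those names is always revalidated.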

4. Leverage Asynchronous Loading and Background Processing

- Load scripts asynchronously to avoid blocking rendering.

- Offload heavy computations or data fetching to background jobs or workers.

- Use web workers for intensive client-side processing.
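
On the server side, offloading can be as simple as handing slow work to a pool and responding immediately. The following is a minimal sketch with `concurrent.futures` from the standard library; `build_report` and `handle_request` are hypothetical names, and the `sleep` call stands in for a slow external call.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def build_report(order_id: int) -> str:
    """Stand-in for slow work such as calling an external service."""
    time.sleep(0.05)
    return f"report-{order_id}"


def handle_request(executor: ThreadPoolExecutor, order_id: int):
    """Queue the slow work and return right away with a handle to the result."""
    future = executor.submit(build_report, order_id)
    return {"status": "accepted", "order_id": order_id}, future


with ThreadPoolExecutor(max_workers=4) as executor:
    responses = [handle_request(executor, i) for i in range(3)]
    # Collected here only to show the outcome; a real handler would not wait.
    reports = [future.result() for _, future in responses]
```

Threads suit I/O-bound work like this. CPU-heavy jobs in Python are usually better served by separate processes or a dedicated job queue.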

5. Monitor and Profile Continuously

Optimization is an ongoing process. Using monitoring and profiling tools can help detect new bottlenecks and verify the effectiveness of caching strategies:

- Use browser dev tools, Lighthouse, or WebPageTest for client-side analysis.

- Implement APM (Application Performance Monitoring) tools like New Relic or Datadog.

- Analyze server logs and database metrics routinely.

- Continuously review cache hit/miss rates to tune policies.
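
Hit and miss counts are easy to collect in-process. As a small sketch, `functools.lru_cache` already tracks them through `cache_info()`; the `load_profile` function, the tiny cache size, and the access pattern below are invented to keep the numbers easy to follow.

```python
from functools import lru_cache


@lru_cache(maxsize=2)
def load_profile(user_id: int) -> dict:
    """Hypothetical lookup that would normally hit a database."""
    return {"id": user_id}


def hit_ratio(cached_func) -> float:
    """Share of calls answered from the cache, between 0.0 and 1.0."""
    info = cached_func.cache_info()
    total = info.hits + info.misses
    return info.hits / total if total else 0.0


# Simulated access pattern: user 1 is popular, users 2 and 3 compete for a slot.
for user_id in [1, 2, 1, 1, 3, 2]:
    load_profile(user_id)

info = load_profile.cache_info()
ratio = hit_ratio(load_profile)
```

A persistently low ratio usually means the cache is too small for the working set or the keys are too specific to be reused.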

Challenges and Best Practices

Cache Invalidation

One of the toughest challenges is deciding when and how to invalidate caches to avoid serving stale data. Staleness is a particular risk in dynamic applications where content changes frequently.

- Version static assets (for example, by putting a content hash in the file name) so that a changed file gets a new URL and is fetched immediately, while unchanged files stay cached.

- Use time-based expiration (TTLs) combined with event-driven invalidation where possible.

- For API and dynamic data caches, selectively expire based on user actions or data changes.
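
The combination of TTLs and event-driven invalidation can be sketched as a small in-process cache. This is an illustration under simplifying assumptions (single process, no locking); the `TTLCache` class and the `product:42` key are hypothetical, and the clock is injectable so that expiry can be shown without waiting.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple


class TTLCache:
    """Entries expire after ttl_seconds, or earlier if explicitly invalidated."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str) -> Optional[Any]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._store[key]  # time-based expiration
            return None
        return value

    def set(self, key: str, value: Any) -> None:
        self._store[key] = (self._clock() + self._ttl, value)

    def invalidate(self, key: str) -> None:
        """Event-driven invalidation: call this when the underlying data changes."""
        self._store.pop(key, None)


now = [0.0]  # fake clock so the example runs instantly
cache = TTLCache(ttl_seconds=30, clock=lambda: now[0])

cache.set("product:42", {"price": 10})
now[0] = 10.0
fresh = cache.get("product:42")        # still within the TTL

cache.invalidate("product:42")         # e.g. the price was just edited
after_edit = cache.get("product:42")   # gone, so the next read reloads it

cache.set("product:42", {"price": 12})  # stored at t=10, expires at t=40
now[0] = 45.0
expired = cache.get("product:42")
```

The TTL acts as a safety net: even if an invalidation event is missed, the stale entry disappears once its time is up.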

Balancing Cache Size and Freshness

Keeping very large caches may help reduce backend hits but consumes more memory and might hold outdated data longer. Balance is necessary:

- Limit cache sizes using LRU (Least Recently Used) or LFU (Least Frequently Used) eviction policies.

- Segment data by access frequency so that the most frequently read items get cache space first.
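
To show what LRU eviction means in practice, here is a minimal sketch built on `collections.OrderedDict`. The `LRUCache` class is illustrative only; production systems would normally rely on the eviction policies built into their cache server or library.

```python
from collections import OrderedDict


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used entry."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self._data: OrderedDict = OrderedDict()

    def get(self, key, default=None):
        if key not in self._data:
            return default
        self._data.move_to_end(key)  # mark as most recently used
        return self._data[key]

    def put(self, key, value) -> None:
        self._data[key] = value
        self._data.move_to_end(key)
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # drop the least recently used entry

    def keys(self):
        """Keys ordered from least to most recently used."""
        return list(self._data)


cache = LRUCache(capacity=2)
cache.put("a", 1)
cache.put("b", 2)
cache.get("a")      # "a" is now more recent than "b"
cache.put("c", 3)   # over capacity, so "b" is evicted
remaining = cache.keys()
```

An LFU policy would track a use counter per key instead of recency, which favors items that are popular over long periods rather than recently touched ones.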

Security and Privacy Concerns

Be cautious about what data is cached, especially sensitive or personalized content. Improper caching can result in privacy leaks.

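- Avoid storing sensitive information in shared caches such as CDNs and proxies. For personalized responses, send `Cache-Control: private` so only the user’s own browser may keep a copy, or `no-store` so nothing is kept at all.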

- Keep per-user data in private caches, and require authentication for access to server-side cache stores.

- Employ HTTPS so that an attacker in the middle cannot tamper with responses before they are cached.
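
On the server side, one common safeguard against leaking personalized content is to make the user part of the cache key. The sketch below assumes a hypothetical `cache_key` helper and key format; the point is only that two users can never share an entry for a personalized page.

```python
from typing import Optional


def cache_key(path: str, user_id: Optional[str], personalized: bool) -> str:
    """Build a cache key that keeps personalized entries separate per user."""
    if not personalized:
        return f"shared:{path}"
    if user_id is None:
        # Refuse to cache rather than risk serving one user's page to another.
        raise ValueError("personalized responses need a user id in the cache key")
    return f"user:{user_id}:{path}"


alice_key = cache_key("/dashboard", "alice", personalized=True)
bob_key = cache_key("/dashboard", "bob", personalized=True)
public_key = cache_key("/pricing", None, personalized=False)
```

Failing loudly when the user id is missing is a deliberate choice here: a cache miss costs some latency, while a wrongly shared entry is a privacy incident.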

Summary

Performance optimization and caching work hand in hand to deliver faster, more scalable, and user-friendly applications. By understanding the common bottlenecks, selecting appropriate caching types, and implementing disciplined caching strategies, you can greatly improve your system’s responsiveness and robustness.

Remember, optimization is iterative and requires continuous monitoring and adjustment. A well-architected caching layer not only boosts performance but also reduces infrastructure costs and enhances user satisfaction in the long run.